The Physicality of a Prompt

The experience of generative AI is meticulously designed to feel ethereal. You type a query, a cursor blinks, and a sophisticated response appears in seconds. It feels weightless, but the physical cost of that digital magic is immense. To the user, a response from a model like Llama 3.1 is just text; to the grid, it is a burst of energy equivalent to running a microwave for up to eight seconds.

While a single ChatGPT query consumes roughly 0.3 watt-hours (Wh), only about 1.5% of the 20 Wh the average U.S. household draws every minute, the cumulative scale is rewriting our infrastructure. We are moving from the “Software Era,” where code was the primary lever of power, to the “Infrastructure Era,” where electrons are the ultimate currency. This is no longer a conversation about algorithms; it is a conversation about the bedrock of our physical world.
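
The energy comparisons in these opening paragraphs come down to unit arithmetic. The Python sketch below reproduces them under two assumptions that do not come from the source: an average U.S. household using about 10,500 kWh per year, and a microwave drawing 1,000 W. The function names are illustrative.

```python
# Back-of-the-envelope energy comparisons for a single prompt.
# Assumptions (not from the source): ~10,500 kWh/year for an average
# U.S. household and a 1,000 W microwave.

MINUTES_PER_YEAR = 365 * 24 * 60      # 525,600 minutes
HOUSEHOLD_KWH_PER_YEAR = 10_500       # assumed annual household use
MICROWAVE_WATTS = 1_000               # assumed microwave power draw


def household_wh_per_minute(kwh_per_year: float = HOUSEHOLD_KWH_PER_YEAR) -> float:
    """Average household draw expressed in watt-hours per minute."""
    return kwh_per_year * 1_000 / MINUTES_PER_YEAR


def microwave_wh(seconds: float, watts: float = MICROWAVE_WATTS) -> float:
    """Energy used by running a microwave for `seconds`, in watt-hours."""
    return watts * seconds / 3_600


query_wh = 0.3                          # per-query figure quoted in the text
per_minute = household_wh_per_minute()  # works out to about 20 Wh
print(f"Household draw: {per_minute:.1f} Wh per minute")
print(f"One query is {query_wh / per_minute:.1%} of that minute")
print(f"Eight seconds of microwave: {microwave_wh(8):.2f} Wh")
```

With these assumptions the household draw comes out to almost exactly 20 Wh per minute, a 0.3 Wh query is about 1.5% of it, and eight seconds of microwaving is roughly 2.2 Wh. The microwave comparison describes a Llama 3.1 response and the 0.3 Wh figure a ChatGPT query, so the two numbers are not directly comparable.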

The Thirst of the Machine: AI’s Hidden Water Footprint

Behind the silicon lies a staggering demand for fresh water. AI models are “thirsty” for two reasons: the massive cooling requirements of high-density data centers and the water-intensive manufacturing of the chips themselves. Chip fabrication depends on “ultra-pure” water, and purifying it is so intensive that it takes roughly 1.5 gallons of fresh water to produce one gallon of usable ultra-pure water. Each chip costs the planet roughly 8 to 10 gallons of water before it ever processes a single token.

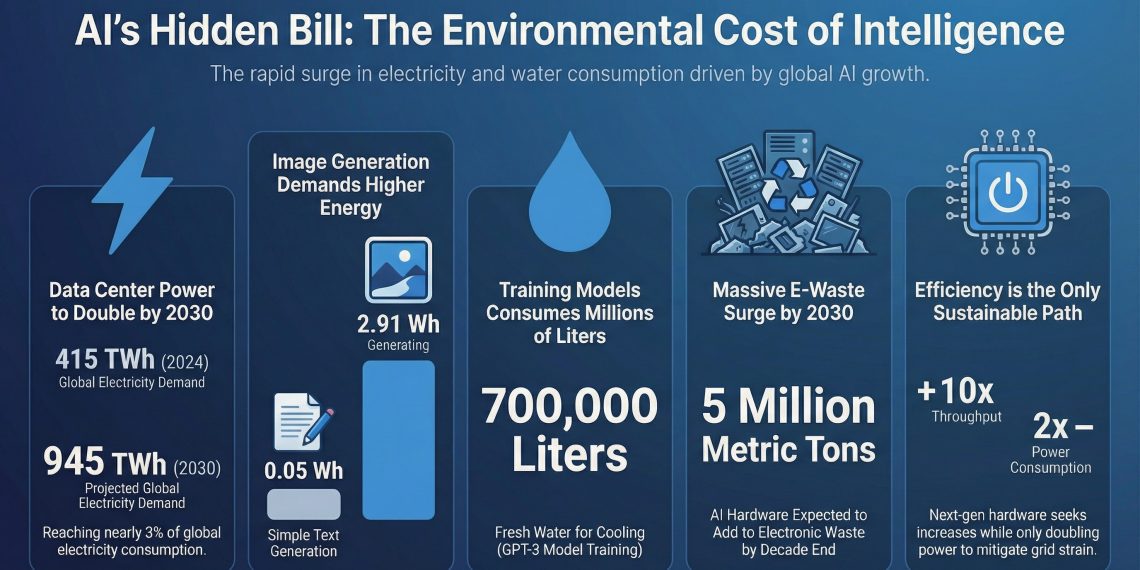
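
The 1.5-to-1 ratio quoted for ultra-pure water also implies a purification yield: only about two-thirds of the water drawn in ends up usable. A minimal Python sketch of that conversion, taking the ratio from the text at face value; the function names are illustrative, and real fabs vary.

```python
# Fresh water needed to produce ultra-pure water (UPW) for chip fabs.
# The 1.5:1 ratio is the figure quoted in the text; real fabs vary.

FRESH_PER_UPW = 1.5  # gallons of fresh water per gallon of UPW (from the text)


def fresh_water_needed(upw_gallons: float, ratio: float = FRESH_PER_UPW) -> float:
    """Gallons of fresh water drawn to yield `upw_gallons` of ultra-pure water."""
    if upw_gallons < 0:
        raise ValueError("upw_gallons must be non-negative")
    return upw_gallons * ratio


def upw_yield(ratio: float = FRESH_PER_UPW) -> float:
    """Fraction of intake water that ends up as usable UPW."""
    return 1 / ratio


print(f"1,000 gal of UPW needs {fresh_water_needed(1_000):,.0f} gal of fresh water")
print(f"Purification yield: {upw_yield():.0%}")
```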

This consumption is increasingly a localized social crisis. Training GPT-3 reportedly consumed 700,000 liters of water, but the operational footprint is where the tension truly mounts. In desert regions like Phoenix, a single data center can consume 56 million gallons annually, equivalent to the water use of 670 families. This has sparked a new era of environmental justice concerns, such as at the xAI facility in Memphis, where residents of a majority-Black community have challenged the facility over its impact on their air and water.
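
Dividing the Phoenix figure by the number of families makes the comparison concrete. A short Python sketch using only the two numbers quoted above; the helper name is illustrative.

```python
# Translate a facility's annual water draw into household equivalents.
# Both inputs are the figures quoted in the text for a Phoenix-area site.

DATA_CENTER_GALLONS_PER_YEAR = 56_000_000
FAMILY_EQUIVALENTS = 670


def gallons_per_family(total_gallons: float, families: int) -> float:
    """Annual water use per family implied by the comparison."""
    return total_gallons / families


annual = gallons_per_family(DATA_CENTER_GALLONS_PER_YEAR, FAMILY_EQUIVALENTS)
daily = annual / 365
print(f"{annual:,.0f} gallons per family per year, about {daily:.0f} per day")
```

The implied figure is roughly 83,600 gallons per family per year, or about 229 gallons a day.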

The Secret Water Cost of Conversation

Researchers estimate that GPT-3 “drinks” approximately 500 mL of fresh water, about one standard bottle, for every 10 to 50 medium-length responses, depending on the climate of the data center’s location.
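
Spreading the bottle across the quoted range gives a per-response figure that is easier to reason about at scale. A minimal Python sketch using the 500 mL and 10-to-50 figures from the text; the helper name is illustrative.

```python
# Per-response water footprint implied by "500 mL per 10 to 50 responses".

BOTTLE_ML = 500
RESPONSES_LOW, RESPONSES_HIGH = 10, 50


def ml_per_response(bottle_ml: float, responses: int) -> float:
    """Water attributed to one response if a bottle covers `responses` replies."""
    return bottle_ml / responses


worst = ml_per_response(BOTTLE_ML, RESPONSES_LOW)   # fewer responses per bottle
best = ml_per_response(BOTTLE_ML, RESPONSES_HIGH)   # more responses per bottle
print(f"Roughly {best:.0f} to {worst:.0f} mL of water per response")
```

That works out to roughly 10 to 50 mL per response, with the spread driven by the data center's location and climate.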

From Megawatts to Gigawatts: The Doubling of the Grid

We are witnessing a historic surge in energy demand. The International Energy Agency (IEA) projects that global data center electricity consumption will jump from ~415 TWh in 2024 to ~945 TWh by 2030. Even in 2030, that will account for a seemingly modest ~3% of global demand, but the figure is deceptive. Data center consumption is growing at about 15% annually, more than four times faster than electricity consumption from all other sectors combined.
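
The two IEA endpoints can be checked against the growth rate with a compound annual growth rate (CAGR) calculation. A short Python sketch, assuming simple annual compounding over the six years from 2024 to 2030.

```python
# Check that the IEA endpoints are consistent with ~15% annual growth.

def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate between two values `years` apart."""
    return (end / start) ** (1 / years) - 1


rate = cagr(415, 945, 2030 - 2024)   # TWh, figures quoted in the text
print(f"Implied growth: {rate:.1%} per year")
```

The result is roughly 14.7% per year, consistent with the ~15% rate quoted in the projection.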

The regional disparity is even more provocative. By 2030, U.S. per-capita data center consumption is expected to exceed 1,200 kWh. To put that in perspective, the data center demand attributable to each American will equal roughly 10% of an average household’s annual electricity use. Unlike electric vehicles, which spread their load across the whole network, data centers concentrate demand in specific “hubs,” making their integration into the grid a localized infrastructure nightmare.
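
The "roughly 10%" comparison depends on what counts as an average household. A minimal Python sketch, assuming about 10,500 kWh per household per year (an assumption, not a figure from the source).

```python
# Compare projected per-capita data center use with household consumption.
# HOUSEHOLD_KWH is an assumption (~10,500 kWh/year), not a figure from the text.

PER_CAPITA_DC_KWH_2030 = 1_200   # projected U.S. figure quoted in the text
HOUSEHOLD_KWH = 10_500           # assumed average annual household use


def share_of_household(per_capita_kwh: float, household_kwh: float = HOUSEHOLD_KWH) -> float:
    """Per-person data center demand as a fraction of one household's annual use."""
    return per_capita_kwh / household_kwh


print(f"{share_of_household(PER_CAPITA_DC_KWH_2030):.0%} of a household's annual use")
```

Under that assumption the ratio lands at about 11%, in line with the rounded figure in the text.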

The Efficiency Paradox: Huang’s Law and the Token Economy

The industry’s primary defense is efficiency. NVIDIA CEO Jensen Huang emphasizes that while throughput (the work done per second) increases 10x with each hardware generation, power consumption only increases by 2x, a roughly fivefold gain in work per unit of energy. For the “AI Factory,” energy efficiency is not just a green initiative; it is the core of the business model. NVIDIA’s stated goal is to “generate 1 token for every dollar,” directly linking electron savings to revenue.
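
If throughput rises 10x while power rises 2x, each generation delivers about 5x more work per unit of energy, and that gain compounds. A short Python sketch taking the 10x and 2x figures at face value; the generation counts are illustrative.

```python
# Efficiency implied by "10x throughput for 2x power" per hardware generation.

THROUGHPUT_GAIN = 10.0  # per generation, as quoted in the text
POWER_GAIN = 2.0        # per generation, as quoted in the text


def perf_per_watt_gain(generations: int,
                       throughput_gain: float = THROUGHPUT_GAIN,
                       power_gain: float = POWER_GAIN) -> float:
    """Cumulative improvement in work per joule after `generations` generations."""
    return (throughput_gain / power_gain) ** generations


for n in range(1, 4):
    print(f"After {n} generation(s): {perf_per_watt_gain(n):.0f}x work per joule")
```

Three generations at this pace would mean 125x more work per joule, which is why the paradox that follows is about total demand rather than per-chip efficiency.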

However, we are currently trapped in an “efficiency paradox.” While individual chips are getting better, electricity consumption by “accelerated servers” is projected to grow about 30% annually. As hardware becomes more efficient, the cost of AI drops, which in turn stimulates more demand, a rebound effect economists call the Jevons paradox. This insatiable hunger for “instant intelligence” is currently outpacing our ability to optimize the hardware, leading to a net increase in total energy consumption.
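
The paradox comes down to a ratio: total energy scales with the work demanded divided by the work delivered per unit of energy. When demand grows faster than efficiency improves, consumption rises even as each chip gets better. The sketch below is a deliberately simple Python model; the 60% demand growth and 25% efficiency gain are hypothetical inputs chosen only to illustrate the mechanism.

```python
# Toy rebound model: total energy = demand / efficiency.
# Growth rates below are hypothetical inputs, not figures from the text.

def energy_growth(demand_growth: float, efficiency_gain: float) -> float:
    """Annual change in total energy when demand and efficiency both grow."""
    return (1 + demand_growth) / (1 + efficiency_gain) - 1


def project(years: int, demand_growth: float, efficiency_gain: float,
            start_energy: float = 1.0) -> list[float]:
    """Relative energy use for each year from 0 to `years`."""
    rate = energy_growth(demand_growth, efficiency_gain)
    return [start_energy * (1 + rate) ** t for t in range(years + 1)]


# Hypothetical: demand for AI work grows 60%/yr, energy efficiency improves 25%/yr.
path = project(5, demand_growth=0.60, efficiency_gain=0.25)
print(f"Net energy growth: {energy_growth(0.60, 0.25):.0%} per year")
print("Relative energy use by year:", [round(e, 2) for e in path])
```

Setting the two rates equal makes total energy flat; any gap in favor of demand shows up as net growth, which is the pattern the paragraph describes.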

Zombie Infrastructure: Why AI is Reopening Nuclear Plants

Energy, not code, has become the primary bottleneck for technological leadership. This reality has pushed tech giants toward desperate measures, essentially raising “zombie infrastructure” from the dead. Microsoft’s landmark 20-year power purchase agreement with Constellation Energy, which is restarting Unit 1 of the Three Mile Island nuclear plant as the Crane Clean Energy Center, is the clearest signal yet that the industry is hunting for carbon-free baseload power at any cost.

The crisis is most visible in Northern Virginia, the world’s data center capital, where the wait to connect a large-scale facility (over 100 MW) to the electrical grid has stretched to seven years. It is a physical stalemate: even with billions to spend on servers, a developer cannot draw power that the grid lacks the capacity to deliver. We are learning that the “cloud” is actually made of copper, steel, and high-voltage lines.

The Climate Duality: Arsonist or Firefighter?

AI is a double-edged sword in the climate fight. As a “firefighter,” it powers projects like Google’s Project Green Light, which optimizes traffic-light timing to reduce emissions, and the SLEAP tool, which researchers use to study carbon sequestration in plants. Yet the “arsonist” side is increasingly difficult to ignore: AI is being used to accelerate fossil fuel discovery and to drive hyper-consumption through personalized marketing.

Perhaps most damning is the impact on the energy transition already under way. In Kansas City, West Virginia, and Salt Lake City, utilities have pushed back the retirement of coal-fired power plants by up to a decade specifically to meet the power surge from new data centers. While tech companies claim “Net Zero” through carbon offsets, the physical reality is that AI is keeping coal plants on life support.

“Comprehensive frameworks for evaluating the net climate impact of AI systems, accounting for both their energy costs and potential environmental benefits, have yet to be widely adopted, and current life-cycle assessments (LCAs) remain non-standardized.”

The Grid We Choose to Build

AI is a tool of the future, but it is currently being powered by the constraints of the past. Every prompt is a withdrawal from a finite bank of resources—water from stressed aquifers and electrons from a struggling grid. We are no longer just building software; we are building a global energy system designed to support a synthetic intellect.

The question for the next decade is not how fast AI can think, but how much the planet can afford to power its thoughts. As we race toward 2030, we must decide if the convenience of “instant intelligence” is worth the delayed retirement of a coal plant or the depletion of a local reservoir. The grid of the future is being built today; we must ensure it serves the stability of the planet as much as the speed of the processor.